Inference Never Sleeps

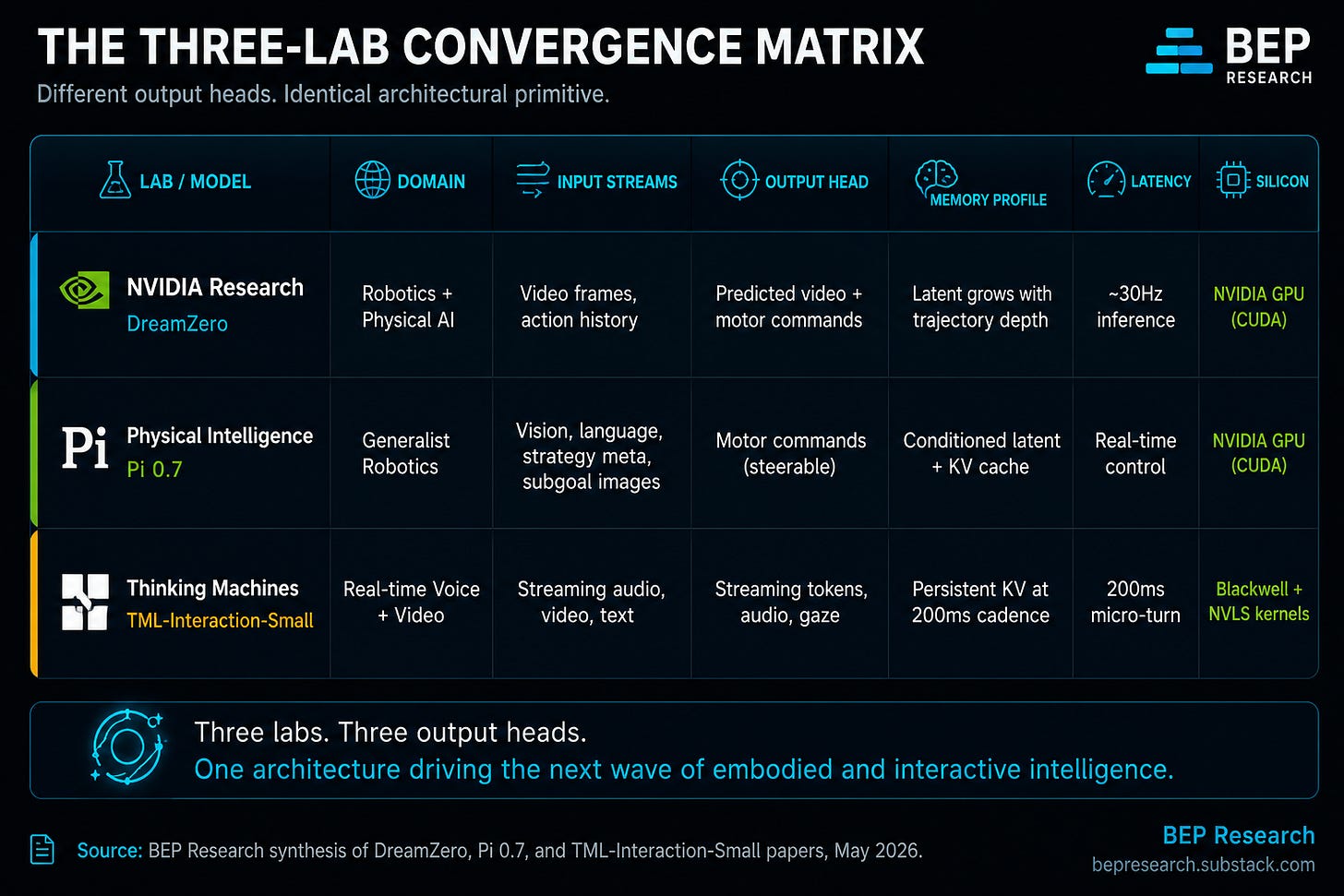

Three frontier AI labs just shipped the same architectural bet in five weeks. NVIDIA, Physical Intelligence, Thinking Machines. DreamZero, Pi 0.7, TML. The market is the last reader.

Three Labs, One Architecture

AI is moving from turn-based, harness-bolted, reactive architectures to continuous, native, predictive ones grounded in time. The model no longer waits for the user to finish typing before it starts thinking. Robots no longer pick the next action one observation at a time. Voice agents no longer take turns. The whole system runs on, all the time, perceiving and acting in the same continuous loop. Inference Never Sleeps.

That is the architectural bet. Three frontier AI labs just shipped the same version of it in five weeks, from three different directions.

Horace He, an engineer at Thinking Machines and formerly a core contributor to PyTorch, posted the cleanest framing of what Mira Murati’s team just shipped: “Model intelligence has exploded, causing the bottleneck to be human↔AI bandwidth.” Same memory-wall logic BEP has been mapping since The Memory Wars, applied to the human side of the loop. Disclosed by an engineering insider, in public, on the day the model shipped.

NVIDIA’s DreamZero made the recipe concrete in February. As I wrote in The World Model Reckoning: “DreamZero jointly predicts video and actions in the same diffusion forward pass... the future in pixels, and the robot executes based on that dream.” That was the GPT-2 moment for Physical AI. Physical Intelligence’s Pi 0.7, released April 2026, is described in the paper as a “steerable generalist robotic foundation model” conditioned on multimodal prompts. Thinking Machines, Mira Murati’s lab, shipped TML-Interaction-Small on May 11, 2026 with a different vocabulary but the same insight: “For interactivity to scale with intelligence, it must be part of the model itself.”

Three labs. Three problem domains. One architectural recipe. That convergence is the thesis.

Turn-Based Is the Harness. Continuous Is the Architecture.

The first instinct on these three releases is to call them faster reactive systems. That framing is correct but incomplete. The deeper shift is rejecting the turn-based primitive every frontier model has been built on for the last decade.

Thinking Machines (TM) names it directly: “Today’s models experience reality in a single thread. Until the user finishes typing or speaking, the model waits with no perception of what the user is doing or how the user is doing it. Until the model finishes generating, its perception freezes.” Their solution is a 200ms micro-turn architecture that streams audio, video, and text simultaneously. The model perceives and responds in the same continuous loop.

Horace He extended the framing mechanically the same week: “Hybrid systems like DRAM kv-cache + SRAM weights (interaction models + autonomous agents) that can get the best of both worlds.” The hybrid he names is the exact split TML-Interaction-Small validates in production. A continuous-perception interaction layer running 200ms micro-turns. An asynchronous background reasoning layer that keeps the deeper thinking in a slower loop.

Disclosed by an engineering insider. In public. On the day the model shipped.

The architectural recipe is no longer disputed inside the labs.

This is the same primitive as DreamZero. A VLA (vision-language-action model, the standard reactive architecture) picks the next action from the current observation, one turn at a time. A WAM (world action model, predictive by design) rolls counterfactual trajectories forward across time, scoring them continuously as new sensor data arrives. Pi 0.7 does the same thing from the policy side, conditioning on subgoal images and strategy metadata to “steer the model precisely to perform many tasks with different strategies.”

TM cites the precedent explicitly: “Some domains take such interactivity as a given. The physical world demands that robotics and autonomous vehicles operate in real time.”

Tesla validated the pattern in cars two years before any of the labs caught up.

Every Tesla running v12 or later is a continuous world-model rollout. End-to-end neural net inference at highway speed, on a vehicle the customer already paid for. The Continuity Convergence is the automotive architecture pattern applied to everything else. Mira Murati’s team built the language-domain version of what NVIDIA and Physical Intelligence built for robotics. The Bitter Lesson applied to interaction. Hand-crafted harnesses lose to learned end-to-end systems.

Turn-based is the harness. Continuous is the architecture. Inference Never Sleeps.

Same abstraction, three entry points. WAMs provide predictive world dynamics. Steerable policies provide controllable behavioral interfaces. Interaction models provide continuous bidirectional perception. The shared primitive is continuous inference grounded in time, not call-and-response. The recipe is the same one NVIDIA has been co-designing the rack-scale platform for since GTC 2026: GPU plus LPU plus Vera CPU plus DPU plus NVLink plus Dynamo. The hardware was ready before the model paradigm asked for it.

The thesis slogan: Inference Never Sleeps. The named framework: The Continuity Convergence. Below the divider: three worked deployment scenarios (humanoid fleet, continuous voice agent, screen-watching coding agent), the unit-corrected token math, the memory wall that just got three new axes, the six-layer investment map with the centerpiece insight nobody is sizing correctly, and the three named risks that could break the timeline.