The World Model Reckoning

Text Was Just the Beginning. Video and Robotics Are Next.

Apologies for the second email of the day, but my feed is nothing but Seedance videos right now and you need to connect the dots fast! I also wanted to make sure you got the correct discount link for the subscription, as Substack had an outage this morning.

Jerry and George from Seinfeld beating each other up. Jackie Chan martial arts movies generated from text prompts. A woman in traditional Chinese dress dancing—movements so fluid you cannot tell it is synthetic. Indie filmmakers in China have gone “full insane mode.”

Less than a year ago, you could detect AI video by counting fingers. That forensic technique lasted maybe 14 months.

Seedance 2.0 is currently only available in China, running on ByteDance’s infrastructure—NVIDIA’s latest chips in Singapore data centers. But the videos flooding global feeds today show where this is headed. We are at the very beginning.

And last week, Jim Fan—senior research scientist at NVIDIA—announced DreamZero, a 14B parameter World Action Model that enables robots to perform tasks they were never trained on. Zero-shot generalization. The GPT-2 moment for Physical AI.

These are not separate stories. They are the same story. Both are world models predicting the next physical state. Both are memory monsters running on NVIDIA silicon. And both point to a massive reordering of who wins and who loses in the next decade.

The Second Paradigm Shift

Matt Shumer published a piece this week called “Something Big Is Happening.” He describes watching AI go from “helpful tool” to “does my job better than I do” over the past year. He builds apps by describing what he wants, walks away for four hours, and returns to find the work done. No corrections needed. He writes: “I am no longer needed for the actual technical work of my job.” Boy, did he scare a lot of people: the post drew close to 40 million views on X.

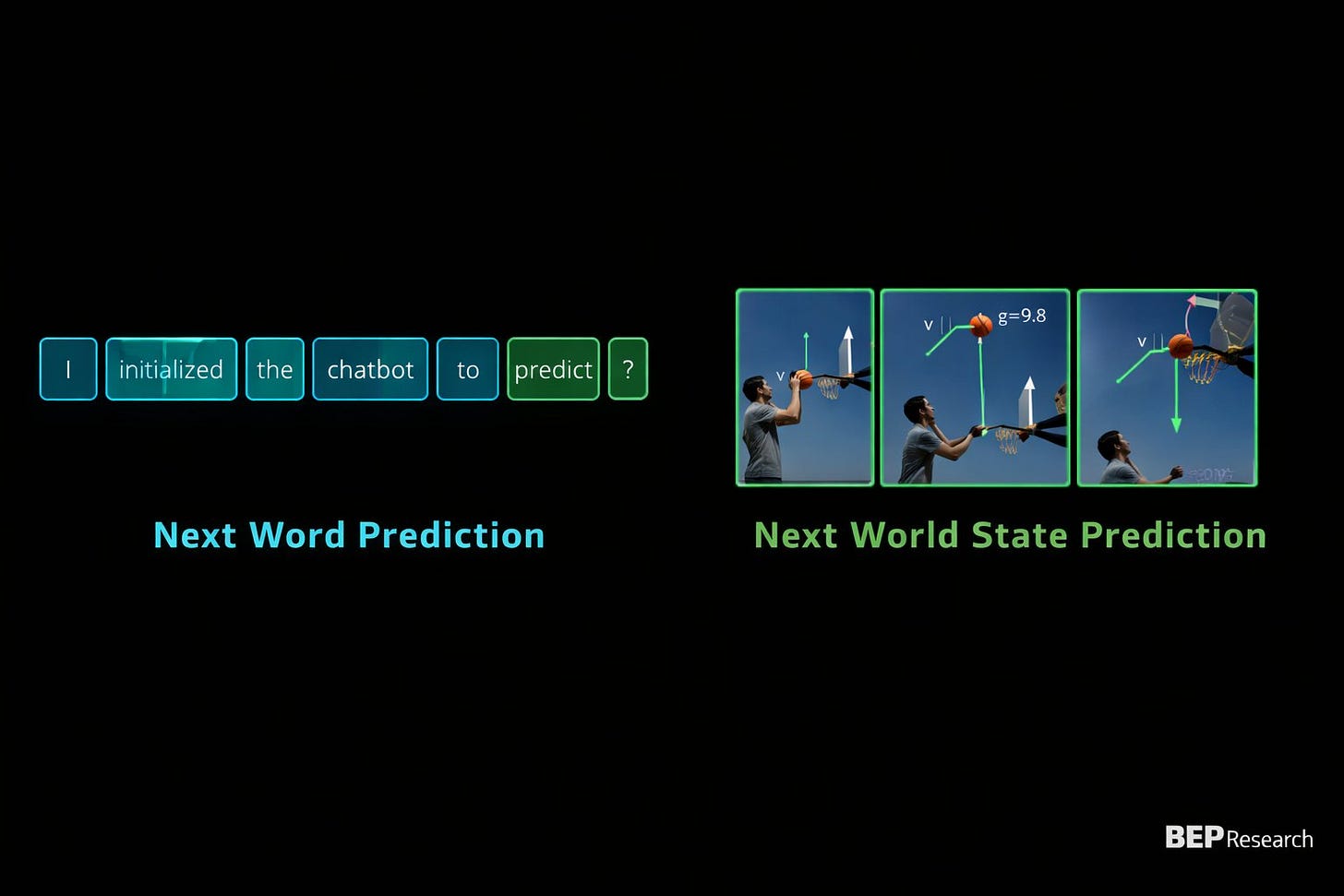

Matt is describing the first paradigm: next word prediction. Language models that write code, analyze contracts, draft briefs, build financial models. GPT-5.3 Codex helped build itself. The feedback loop is accelerating. Dario Amodei has warned that AI could eliminate half of entry-level white-collar jobs within one to five years.

But there is a second paradigm emerging underneath the first. Jim Fan’s framing:

“Next word prediction was the first pre-training paradigm. Now we are living through the second paradigm shift: world modeling, or ‘next physical state prediction.’ Very few understand how far-reaching this shift is.”

The first paradigm compresses human knowledge into statistical patterns over text. It is eating software engineering, law, finance, analysis—anything that happens on a screen.

The second paradigm is different: learnable physics simulators. World models capture counterfactuals—how would the future unfold differently given an alternative action? This is not knowledge retrieval. This is imagination constrained by physics. It is coming for everything that happens in physical space.
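To make “counterfactuals” concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `State` fields, the `next_state` function, and the constants are hypothetical, and the hand-written physics stands in for what a real world model would learn from video. The point is only the shape of the computation: the same starting state, rolled forward under two different action sequences, yields two different futures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class State:
    height: float    # metres above the floor
    velocity: float  # metres per second, positive is up


GRAVITY = -9.81  # m/s^2
DT = 0.1         # seconds per prediction step


def next_state(state: State, thrust: float) -> State:
    """Hand-written stand-in for a learned model: predict the next physical state."""
    velocity = state.velocity + (GRAVITY + thrust) * DT
    height = max(0.0, state.height + velocity * DT)
    if height == 0.0:
        velocity = 0.0  # the object is resting on the floor
    return State(height, velocity)


def rollout(start: State, actions: list[float]) -> list[State]:
    """Predict a whole future by applying next_state once per action."""
    states = [start]
    for thrust in actions:
        states.append(next_state(states[-1], thrust))
    return states


start = State(height=1.0, velocity=0.0)
do_nothing = rollout(start, [0.0] * 10)  # future A: the object falls
push_up = rollout(start, [15.0] * 10)    # future B: an upward push wins over gravity

print("no action ->", do_nothing[-1])
print("push up   ->", push_up[-1])
```

In this toy the physics is written by hand. In the world model framing, `next_state` is the part that gets learned, and comparing rollouts is how the model evaluates an alternative action before anything moves.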

Matt’s software engineers are losing their jobs to the first paradigm. The second paradigm comes for the physical world—entertainment, robotics, manufacturing, anything involving atoms instead of bits.

World models allocate parameters to physics—to understanding how reality evolves under intervention. And that changes everything about what can be generated, who can generate it, and what protections exist for those who created the original content.

DreamZero: Robots That Dream

DreamZero inverts how robots traditionally learn. Vision-Language-Action models process vision through a language backbone, then decode actions. DreamZero jointly predicts video and actions in the same diffusion forward pass. The world model “dreams” the future in pixels, and the robot executes based on that dream.
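A toy sketch of that data flow, in Python, may help. This is not DreamZero's code or architecture: `toy_denoiser`, `joint_sample`, the step count, and the blending schedule are all hypothetical, and the “network” is a made-up rule. The only thing it illustrates is the claim above: one sampling loop in which a single denoiser call per step updates both the future video latents and the actions.

```python
import random

STEPS = 20  # number of denoising steps (arbitrary for this toy)


def toy_denoiser(noisy_video, noisy_actions, observation):
    """Hypothetical stand-in for a learned network.

    A single call returns clean estimates for BOTH the future video
    latents and the actions. The rule is made up: the predicted future
    repeats the observation and every action equals the observation mean.
    A real network would also use the noisy inputs; this toy ignores them.
    """
    clean_video = list(observation)
    mean_obs = sum(observation) / len(observation)
    clean_actions = [mean_obs] * len(noisy_actions)
    return clean_video, clean_actions


def joint_sample(observation, num_actions=2, seed=0):
    """Denoise video latents and actions together, one forward pass per step."""
    rng = random.Random(seed)
    video = [rng.gauss(0.0, 1.0) for _ in observation]
    actions = [rng.gauss(0.0, 1.0) for _ in range(num_actions)]
    for step in range(STEPS):
        # One call covers both modalities; there is no separate action decoder.
        video_hat, actions_hat = toy_denoiser(video, actions, observation)
        blend = 1.0 / (STEPS - step)  # reaches 1.0 on the final step
        video = [v + blend * (vh - v) for v, vh in zip(video, video_hat)]
        actions = [a + blend * (ah - a) for a, ah in zip(actions, actions_hat)]
    return video, actions


observation = [0.2, 0.4, 0.6, 0.8]
dreamed_video, motor_commands = joint_sample(observation)
print(dreamed_video, motor_commands)
```

Both outputs start as noise and are refined in lockstep, so the “dream” and the motor commands come out of the same loop and the same call.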

The discoveries that matter for investment frameworks:

Zero-shot generalization: Robots performing tasks they were never trained on. Emergent capability from scaling. This is the GPT-2 moment—capabilities appearing that were not explicitly programmed.

Diversity over repetitions: World Action Models (WAMs) learn best from diverse data, not repeated demos. This breaks the assumption that robotics requires massive proprietary demonstration datasets. YouTube-scale video becomes the training corpus.

X-embodiment via pixels: Skills transfer across embodiments, both robot-to-robot and human-to-robot. 55 trajectories (~30 minutes of teleoperation) are enough to adapt to new hardware. Pixels become the universal bridge, which means NVIDIA’s stack becomes the universal substrate.

The Video Slop and the Robot Arm

Seedance generating Jerry Seinfeld throwing punches and DreamZero controlling a robot arm are solving the same problem. Both encode spatial state, physics parameters, temporal dynamics, counterfactual branches. The difference is output: pixels for entertainment versus motor commands for execution. The world model underneath is architecturally similar.

Both run on the same infrastructure. ByteDance’s Seedance servers use NVIDIA’s latest chips in Singapore. DreamZero runs on NVIDIA silicon. The paradigm is the same. The silicon is the same. Only the output differs.

Both entertainment and embodiment flow from the same computational primitive: next physical state prediction. And both require the same memory-hungry infrastructure to run.

Why Silicon Must Learn to Dream

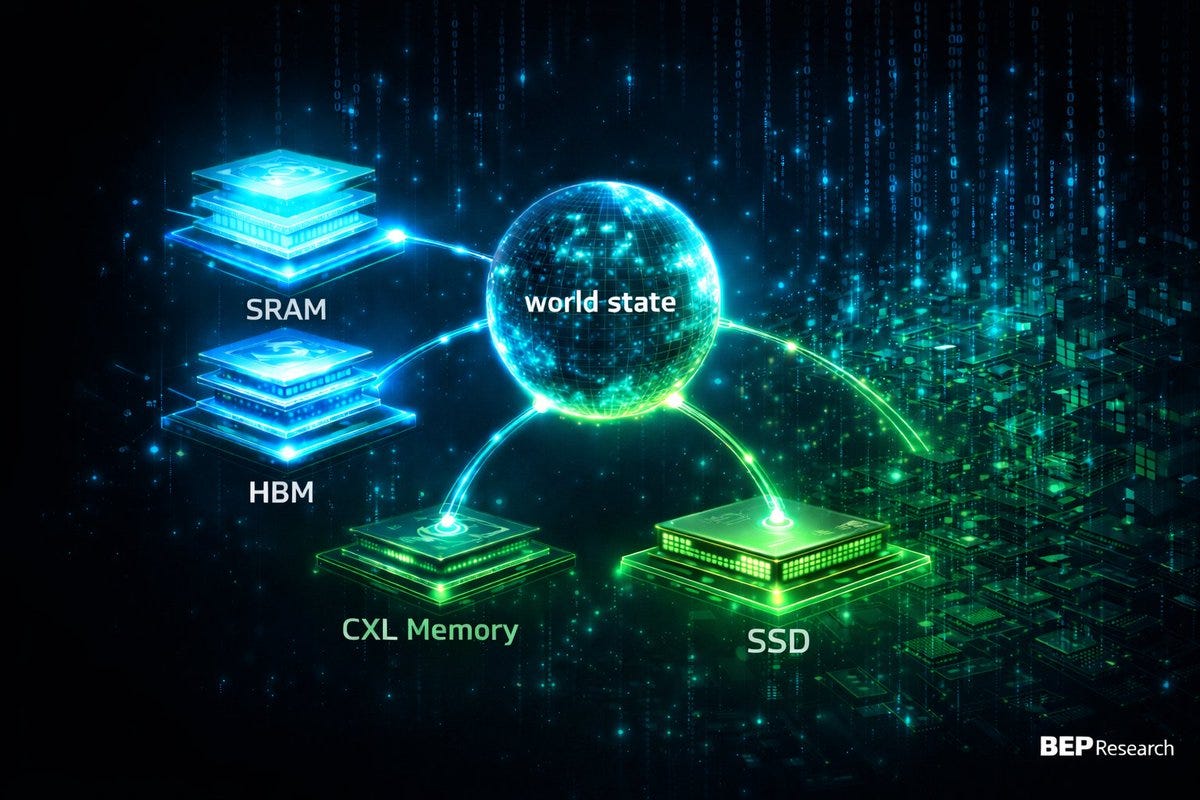

World models do not run on wishes. I wrote in The Memory Wars that video world models are memory monsters. Jim’s post validates this. DreamZero proves it. Seedance demonstrates it at consumer scale—on NVIDIA GPUs in Singapore data centers.

A world model must hold in working memory: spatial state for every object, physics parameters, temporal dynamics, counterfactual branches. All simultaneously. All at real-time latency.

A 70B-class language model at 128K context needs ~40GB of KV cache, a figure consistent with a Llama-style configuration using grouped-query attention and 16-bit values. That is just for text. World models predicting physical state across complex scenes? Memory scales with world complexity. A single Seedance generation (Jerry and George fighting in a coffee shop) requires the model to track two bodies, furniture, physics of impact, camera motion, and lighting consistency, all simultaneously.
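For readers who want to check the ~40GB figure, here is a back-of-the-envelope sketch in Python. The model shape is an assumption, not something stated above: 80 layers, 8 key/value heads (grouped-query attention), head dimension 128, 16-bit values, and a single sequence, which matches common Llama-style 70B configurations. The function name `kv_cache_bytes` is made up for this example.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, context_tokens, bytes_per_value=2):
    """Size of the KV cache for one sequence.

    The leading 2 counts one key tensor plus one value tensor per layer.
    """
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value * context_tokens


GIB = 1024 ** 3
CONTEXT = 128 * 1024  # 128K tokens

# Assumed Llama-style 70B shape with grouped-query attention (8 KV heads).
gqa_gib = kv_cache_bytes(80, 8, 128, CONTEXT) / GIB

# Same shape without grouped-query attention (64 KV heads), for comparison.
mha_gib = kv_cache_bytes(80, 64, 128, CONTEXT) / GIB

print(f"GQA: {gqa_gib:.0f} GiB")  # GQA: 40 GiB
print(f"MHA: {mha_gib:.0f} GiB")  # MHA: 320 GiB
```

The cache grows linearly with context length and with the number of key/value heads, which is why the same model without grouped-query attention would need eight times as much. That is for one text sequence, before any video state enters the picture.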

David Patterson, Turing laureate and co-developer of RISC, wrote in a paper with Ma published in January 2026:

“The primary challenges are memory and interconnect rather than compute.”

That is even more true for world models than for language models. Physics does not compress as well as language statistics. Current silicon philosophy (high FLOPS, HBM stacks, bandwidth-optimized interconnect) was designed for training LLMs. It is a mismatch for world model inference.

Silicon must learn to dream. That requires memory architectures we have not yet built.

Winners and Losers

The world model paradigm shift creates a new investment framework. Here is who wins, who loses, and who faces existential questions.

Winners: Infrastructure

NVIDIA: They are already winning. Seedance runs on NVIDIA chips in Singapore. DreamZero is NVIDIA research. The Cosmos → DreamZero → Thor stack is explicitly designed for Physical AI. DreamZero being open source is the CUDA playbook: democratize the paradigm, own the infrastructure. The “X-embodiment via pixels” discovery means hardware diversity does not fragment the market—NVIDIA’s stack becomes universal.

HBM Suppliers (SK Hynix, Samsung, Micron): The 16-Hi HBM race is often framed as “more memory for bigger LLMs.” But world models may be even more memory-hungry. Video state, physics simulation, counterfactual branches—capacity requirements could exceed what language models need. Whoever wins HBM scaling enables the Seedance-scale generation everyone wants to run.

Memory Interface Players (Rambus, Alphawave): The memory wall is about moving data, not just storing it. Interface IP enabling higher bandwidth at lower power becomes increasingly valuable. World models need data flowing continuously between memory tiers.

Data Center Operators with NVIDIA Allocation: ByteDance runs Seedance on Singapore infrastructure with NVIDIA’s latest chips. Access to NVIDIA silicon—still supply-constrained—determines who can offer world model generation at scale. Operators with guaranteed allocation have a moat.

Winners: Live and Authentic

Live Sports and Events: When everything can be generated, the ungenerated becomes premium. Live sports—where outcomes are unpredictable and physical stakes are real—cannot be faked. Concerts, festivals, theater—experiences requiring physical presence. The premium on “you had to be there” increases as synthetic entertainment floods every screen.

Authenticity Verification: Companies that can certify content as human-created or “captured not generated” may find a market. Provenance becomes valuable when fakery becomes trivial.

Losers: IP Holders Without Moats

Traditional Studios and Content Libraries: Seedance generates Jerry and George fighting. It generates Jackie Chan movies. It learned from watching decades of content. What happens to the value of legacy libraries when anyone can generate more of it? IP that depends on scarcity—on being the only source of a particular style, character, or aesthetic—faces compression.

Stock Media (Getty, Shutterstock): Why license a stock video when you can generate exactly what you need? The Seedance videos flooding feeds today are not licensed from anyone. Stock media faces existential pressure as generation quality crosses the threshold of “good enough.”

Character IP Without Live Components: Animated characters, CGI-heavy franchises, virtual influencers—anything that exists purely as pixels can be approximated by world models trained on public data. Jerry Seinfeld exists as pixels in thousands of hours of footage. Now anyone can generate new Jerry footage.

The Uncertain Middle

Streaming Platforms (Netflix, Disney+): Do they become generation platforms? Do they license world models and let users generate personalized content? Or do they become curators of “verified human-created” content as a premium tier? The business model is unclear.

Social Platforms (TikTok, YouTube, Instagram): They already struggle with misinformation. World models generating photorealistic video at scale makes verification nearly impossible. Do they embrace generation tools? Restrict them? Label everything? TikTok’s parent company owns Seedance—they are already in the generation business.

Actors, Artists, Creators: Their likenesses, styles, and techniques become training data. Some will monetize this (license your style to a world model). Some will fight it (litigation). Most will face a world where their creative output can be approximated without their involvement.

The IP Reckoning

This deserves its own section because the implications are profound.

Seedance learned physics and motion from watching videos. Those videos were created by humans—cinematographers, martial artists, actors, directors. The world model absorbed their work and can now generate more of it on demand.

Jerry Seinfeld and Jason Alexander spent years developing their characters. Their physical comedy, their timing, their mannerisms—all captured in footage that trained a model. Now that model generates new Seinfeld content without involving Seinfeld.

This is not like text-based AI, where the output is clearly derivative and often attributed. Video world models generate pixels that look original. A Jackie Chan fight scene generated by Seedance does not credit Jackie Chan. It does not pay Jackie Chan. It simply... exists, trained on decades of his work.

Legal frameworks are not ready for this. Copyright was designed for copying, not for generation. Trademark protects names and logos, not fighting styles or comedic timing. Likeness rights vary by jurisdiction and are nearly unenforceable across borders.

Seedance is available only in China, yet it runs on servers in Singapore. The videos go viral globally. Jurisdiction is unclear. Enforcement is practically impossible.

For investors, this means:

IP value depends on enforceability. A character that can be generated without consequence is worth less than a character protected by active litigation and clear jurisdiction.

Live rights appreciate. You cannot generate a live event. You cannot generate a concert someone attended. Physical presence becomes the moat.

First-mover generation platforms win. ByteDance has Seedance. Whoever builds the Western equivalent—the “Photoshop for video”—captures the value that shifts away from traditional content creation.

NVIDIA wins regardless. Every world model generating Jerry Seinfeld or Jackie Chan or dancing women runs on NVIDIA silicon. They are the picks and shovels.

The Investment Framework

Putting it together:

Long infrastructure: NVIDIA, HBM suppliers, memory interface players. World models are memory monsters. The silicon that enables them captures value regardless of who wins the application layer. ByteDance uses NVIDIA. Everyone will use NVIDIA.

Long live experiences: Sports rights, live events, anything requiring physical presence. Scarcity of the ungenerated becomes a moat.

Short or avoid unprotected IP: Content libraries, stock media, character IP without live components. Generation commoditizes what scarcity once protected. If it exists as pixels, it can be approximated.

Watch the uncertain middle: Streaming platforms, social networks, creator economy. Business models will evolve, but the direction is unclear. Wait for clarity.

Emerging opportunities: Authenticity verification, provenance certification, “human-created” premium tiers. These markets do not exist at scale yet but may emerge as generation becomes ubiquitous.

We Are at the Beginning

Seedance is only available in China. The viral videos flooding my feed are just the first wave. When this technology reaches global availability—and it will—the world model reckoning accelerates.

Jim Fan is right: 2026 marks the year Large World Models lay real foundations. DreamZero for robotics. Seedance for entertainment. Both running on NVIDIA infrastructure. Both proving the paradigm works.

The silicon will follow the demand. The memory architectures will evolve. The infrastructure winners are already clear.

The content landscape is about to be reordered. What can be generated will be generated. What cannot—live moments, physical presence, authentic human experience—becomes the new scarcity.

The world model reckoning asks us to build silicon that can dream. It also asks what remains valuable when dreams become indistinguishable from reality.

What’s Changing at BEP Research

On March 9th, BEP Research is moving to paid subscriptions.

The timing is deliberate: NVIDIA’s GTC conference starts the following week, and I will be there in person. Paid subscribers will get exclusive access to real-time GTC coverage—in-depth analysis of every major announcement, on-the-ground interviews, and breakdowns of what the roadmap changes actually mean for your portfolio. Not recaps. Real analysis, in real time.

After March 9th, free subscribers will still receive post summaries and occasional public pieces. But the deep work—the Co-Design Thesis series, investment frameworks, earnings breakdowns, supply chain deep dives, and all GTC coverage—moves behind the paywall.

Pricing

Because you have been here from the beginning, I am offering an early bird rate that disappears when the paywall goes live:

Early Bird: ~$350/year (13% off) — available now through March 9th only.

→ Lock in the Early Bird rate here

After March 9th, standard pricing applies:

Annual: $400/year

Monthly: $45/month

Founding Member: $450/year — for those who want to go above and beyond in supporting BEP Research. Founding Members get permanent recognition as the people who backed this work from day one, and will get priority consideration as I build out the institutional tier.

I will be direct: this is premium-priced for a Substack. It is priced that way on purpose. I am building institutional-quality research without sell-side conflicts, without advertising, and without the surface-level takes that dominate financial media. If even one analysis helps you size a position correctly or avoid a bad trade, the subscription pays for itself many times over. Several of you have already told me exactly that.

Institutional Access

I am also developing an institutional tier for teams and funds that want deeper engagement. I am already in conversations with several groups about multi-seat licenses that include direct analyst calls and priority Q&A. If that is relevant to your team, reply to this email and we will set up a conversation.

To everyone who has already pledged—thank you. You will be among the first to convert when payments go live, and your early support is what made this possible.

For everyone else: the early bird window closes March 9th. After that, it is $400/year.

→ Lock in the Early Bird rate before March 9th

See You at GTC

I will be at NVIDIA GTC in San Jose March 16-19. Jensen’s keynote is Monday March 16th at 11am Pacific—if DreamZero and Cosmos are any indication, this is where the second paradigm shift becomes undeniable.

Sessions I am watching: How Open World Models are Powering the Next Breakthroughs in Physical AI [S81667], Humanoid Robotics at Scale [S81645], and Rack-Scale Connectivity for Blackwell and Rubin (Astera Labs) [EX82087].

Giveaway: I am giving away a Jetson Orin Nano Super to a BEP Research reader. Subscribe so you do not miss the details.

Resources

Matt Shumer, “Something Big Is Happening” (February 2026)

DreamZero Project Page (NVIDIA Research)

DreamZero Open Source Model (GitHub)

Jim Fan on World Models and DreamZero (Twitter/X @DrJimFan)

Seedance 2.0 demos: @zhao_dashuai, @EHuanglu (Twitter/X)

Patterson and Ma, “Challenges and Research Directions for LLM Inference Hardware”

The Memory Wars (BEP Research)

The Memory Wall (BEP Research)

About the Author

Ben Pouladian is a Los Angeles-based tech investor focused on AI infrastructure and semiconductors. EE degree from UC San Diego (Professor Fainman’s ultrafast nanoscale optics lab). Chairman of the Leadership Board at Terasaki Institute for Biomedical Innovation.

Follow: @benitoz (Twitter/X) | @bepresearch (Substack)

Disclosure: The author holds positions in NVIDIA and related semiconductor investments. This is not investment advice.