Top 10 Tech Predictions for 2026: Where the Smart Money Is Heading

From NVIDIA’s half-trillion-dollar order book to SpaceX’s trillion-dollar IPO—the AI infrastructure buildout enters its most consequential phase.

The AI infrastructure race is accelerating far faster than most investors anticipated. We’re witnessing $500+ billion in hyperscaler capex colliding against hard physical constraints in power, packaging, and memory—creating an unusual investment landscape where understanding bottlenecks matters more than chasing performance benchmarks.

Having spent years in the semiconductor trenches—from my electrical engineering work on silicon photonics at UC San Diego’s ultrafast nanoscale optics lab to scaling Deco Lighting’s LED manufacturing from startup to $50M+ in revenue—I’ve learned that the physics always wins eventually. The question for investors is: who’s positioned to profit from those physics constraints in 2026?

Here are my 10 highest-conviction predictions for the year ahead, spanning semiconductors, AI infrastructure, and software.

1. NVIDIA’s Rubin Cycle Extends Dominance Through 2028

NVIDIA’s competitive moat is widening, not narrowing. Jensen Huang revealed in late 2025 that NVIDIA has “half a trillion dollars in Blackwell and Rubin revenue visibility” from early 2025 through calendar 2026—a staggering forward order book that virtually guarantees dominance.

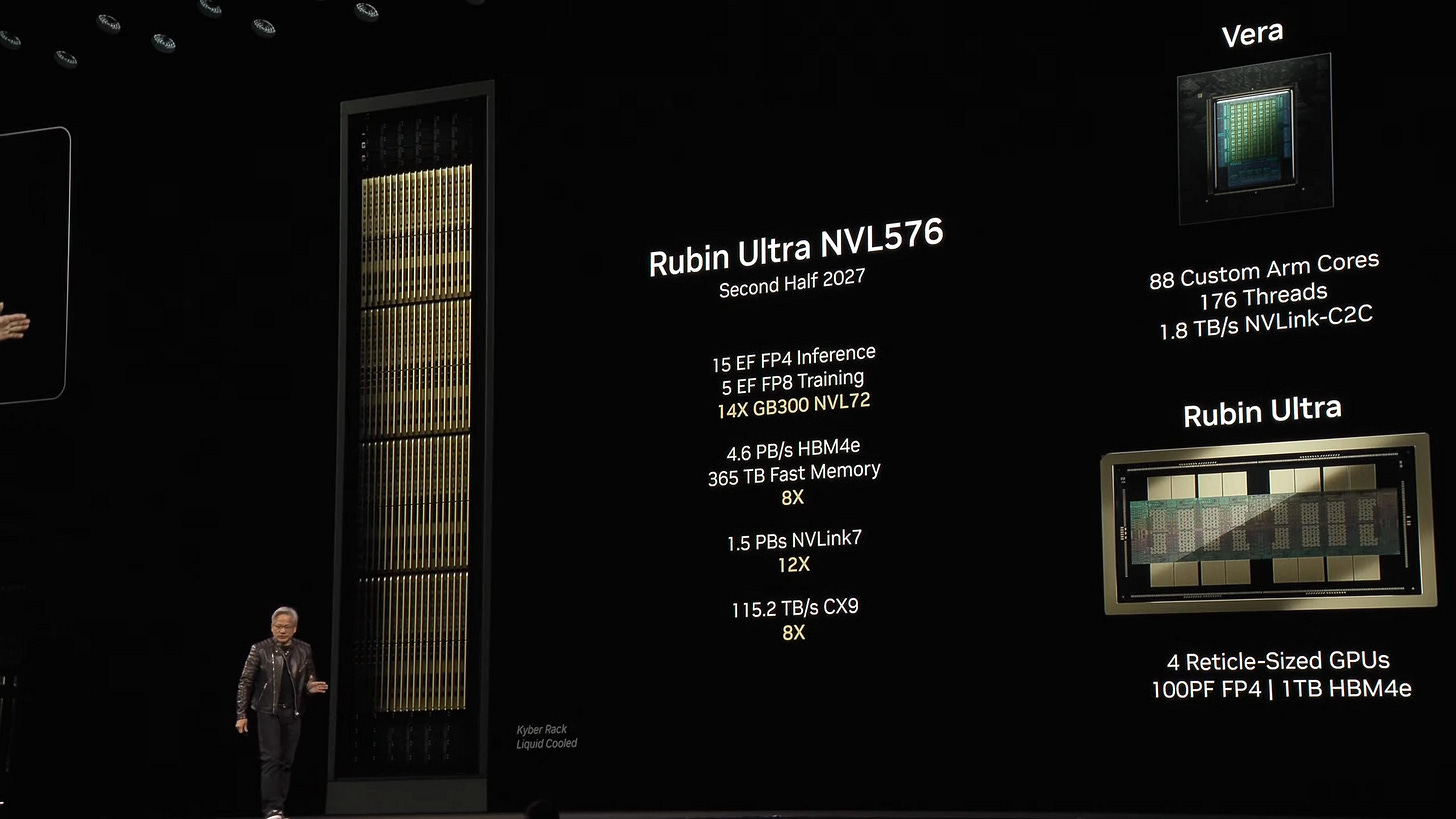

The Rubin architecture launching Q3 2026 represents a generational leap: 50 petaflops of FP4 compute (2.5x improvement over Blackwell), HBM4 memory delivering 13 TB/s bandwidth, and drop-in compatibility with existing infrastructure. The Rubin NVL144 configuration will deliver 3.6 exaflops per rack—ensuring hyperscalers remain locked into NVIDIA’s ecosystem.

Perhaps most telling is the $20 billion Groq technology licensing deal announced December 2025. NVIDIA paid nearly 3x Groq’s September valuation to acquire licensing rights to the LPU inference technology—effectively neutralizing the only credible hardware threat to their inference dominance. This mirrors the 2019 Mellanox acquisition that became the foundation of NVIDIA’s networking moat.

The CUDA ecosystem remains impenetrable: 4+ million developers, 500+ million CUDA-enabled GPUs installed, and switching costs that make migration economically irrational. AMD’s ROCm remains 3-5 years behind in tooling maturity.

Investment angle: NVIDIA’s data center revenue exceeds $250 billion in 2026 as Blackwell reaches full production and early Rubin shipments begin.

2. SpaceX’s $1.5 Trillion IPO Catalyzes the Orbital Compute Era

SpaceX has confirmed plans for a 2026 IPO targeting an unprecedented $1.5 trillion valuation—making it the largest public offering in history. On December 10, 2025, Elon Musk validated the timeline on X, responding “As usual, Eric is accurate” to Ars Technica’s report. Most intriguing: the company’s CFO memo cited funding for “AI data centers in space” as a primary driver for going public.

SpaceX’s valuation trajectory has been extraordinary: it reached $800 billion in December 2025 at $421/share, double the $400 billion from July 2025. Starlink now serves 9 million active subscribers and adds over 20,000 new users daily, with revenue estimates around $11.8 billion for 2025.

The orbital compute thesis is no longer science fiction. Starcloud achieved first-in-space LLM training in December 2025—successfully training Google’s Gemma on an NVIDIA H100 aboard their satellite. China has launched the first 12 satellites of a planned 2,800-satellite AI computing constellation. Google announced Project Suncatcher with radiation-hardened TPUs launching in 2027. Jeff Bezos endorsed the concept publicly: “We’re going to start building these gigawatt data centers in space.”

The physics advantages are real: solar panels in orbit operate at 8x terrestrial efficiency, heat radiates directly into vacuum eliminating water-intensive cooling, and there are no permitting battles or grid connection constraints. Musk stated that Starship could deliver 300-500GW per year of solar-powered AI satellites to orbit—roughly 5-8x current global data center capacity.
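
As a quick consistency check on that last figure, each end of Musk's range can be divided by the stated multiple to see what baseline for current global data center capacity it implies. This is a minimal sketch: pairing 300 GW with 5x and 500 GW with 8x is my assumption about how the range was meant, and the function name is illustrative.

```python
def implied_baseline_gw(orbital_gw_per_year: float, multiple: float) -> float:
    """Baseline capacity implied by 'orbital_gw_per_year equals multiple x baseline'."""
    return orbital_gw_per_year / multiple


# Figures quoted in the text; the low/high pairing is an assumption.
low = implied_baseline_gw(300.0, 5.0)
high = implied_baseline_gw(500.0, 8.0)

print(f"Implied global data center capacity: {low:.1f}-{high:.1f} GW")
```

Both ends land close to 60 GW, so the quoted range is at least internally consistent.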

Investment angle: SpaceX’s IPO—likely raising $30+ billion—provides the capital to move orbital compute from demonstration to production scale. The $2 billion SpaceX investment in xAI signals the convergence is already underway. Watch for a formal SpaceX-xAI joint venture announcement for orbital AI training infrastructure by late 2026.

3. OpenAI’s Model Velocity Accelerates Toward a Landmark IPO

Reports of OpenAI hitting scaling walls are premature. GPT-5 launched in August 2025, followed by GPT-5.1 in November and GPT-5.2 in December: three major iterations in four months. Sam Altman confirmed a $20 billion ARR exit rate for 2025.

The o-series reasoning models represent a paradigm shift. o3 achieved 25.2% on FrontierMath—a benchmark where no other model exceeded 2%. On ARC-AGI, o3 broke a five-year barrier with 87.5% at high compute. These aren’t incremental improvements; they’re discontinuous capability jumps that justify premium pricing.

ChatGPT now has 800-900 million weekly active users—a consumer moat rivaling the largest internet platforms. The infrastructure commitments are staggering: $1.4 trillion in data center deals over eight years, including $300B with Oracle, $250B with Microsoft, and the expanded Stargate Project.

The restructured Microsoft partnership actually strengthens OpenAI’s position: Microsoft lost its right of first refusal on compute, while OpenAI can now pursue third-party partnerships and government contracts on any cloud.

Investment angle: OpenAI’s IPO in late 2026 or early 2027 values the company at $750B-$1 trillion, making it one of the largest tech IPOs in history. The combination of o4/o5 reasoning advances plus GPT-6 positions OpenAI to maintain frontier model leadership.

4. xAI Hits $300B+ Valuation as the Third Force in Frontier AI

xAI’s trajectory from founding in July 2023 to $230 billion valuation in December 2025 represents the fastest value creation in AI history—and it’s not done yet.

Colossus is now the world’s largest AI supercomputer: 150,000 H100s, 50,000 H200s, and 30,000 GB200s, a combined 230,000 GPUs in a single training cluster. xAI built this in 122 days, compared to typical 18-24 month data center timelines. Expansion plans target 1 million GPUs by Q2 2027 through the second Memphis facility, with 1.5GW+ power capacity via the Solaris energy joint venture.

Grok 4 is legitimately competitive with frontier models: 1483 Elo on Chatbot Arena (top-ranked in thinking mode), 93.3% on AIME 2024, and native multi-agent orchestration. The real-time X platform integration provides a unique data moat—Grok has access to live information streams that other models cannot replicate.

The ecosystem integration is compelling: Grok is rolling out to Tesla vehicles (beta December 2025), with planned integration into FSD and Optimus robots. SpaceX invested $2 billion in xAI and uses Grok for mission planning. xAI for Government launched with a $200 million DoD contract in December.

Investment angle: xAI reaches a $300-350 billion valuation by end of 2026, driven by Colossus expansion to 500K+ GPUs, the Grok 5 launch, and deepening Tesla/SpaceX integration. That positions it for a 2027 IPO as the third pillar of frontier AI alongside OpenAI and Anthropic.

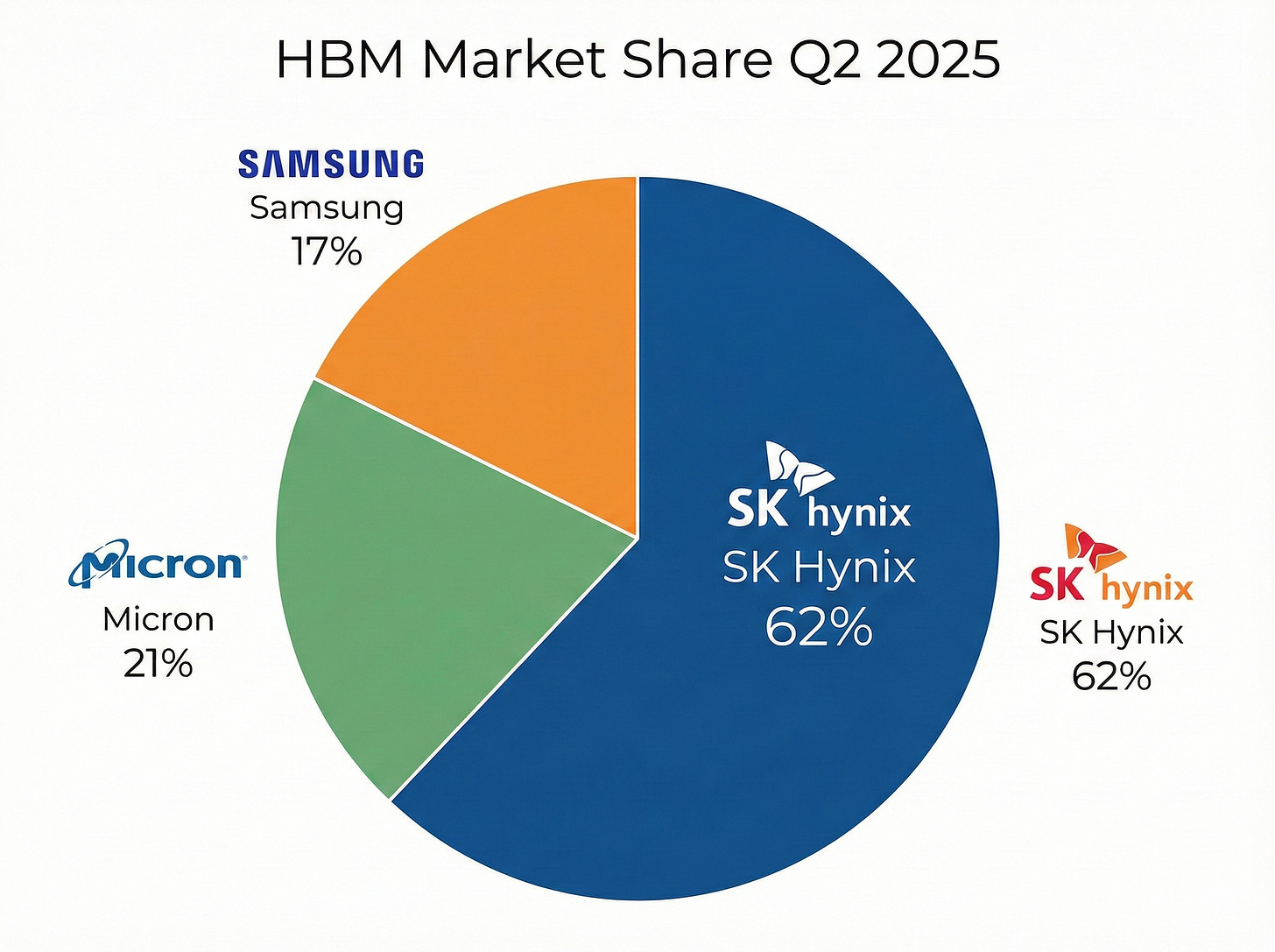

5. HBM Remains Structurally Undersupplied—SK Hynix Commands 60%+ Share

The high-bandwidth memory market has emerged as AI infrastructure’s critical path dependency. SK Hynix has executed a remarkable ascension, surpassing Samsung for the first time ever to capture 62% of HBM shipments while simultaneously becoming the world’s largest memory supplier by revenue.

HBM consumes 4x the wafer capacity per gigabyte versus standard DRAM, creating constraints even as capacity expands. Both SK Hynix and Samsung have already sold out their entire 2026 HBM capacity, with HBM3E prices rising ~20% for 2026 contracts.

HBM content per accelerator continues climbing: NVIDIA’s Blackwell platforms use 192GB of HBM3E versus the H100’s 80GB (a 2.4x step), while Vera Rubin targets 1TB+ of HBM4E (roughly 5x more again). Memory scaling of this magnitude with each chip generation creates demand that consistently outpaces capacity additions.

Investment angle: SK Hynix remains the clearest beneficiary. Micron’s rise from 4% to 21% share suggests meaningful upside; the company expects an $8 billion annualized HBM run rate. Samsung’s HBM4 qualification for Google TPU could restore its position to 30%+ share by late 2026.

6. Power and Cooling Infrastructure Becomes the Binding Constraint—800VDC and Behind-the-Meter Win

Data center power demand is growing at a pace that overwhelms grid infrastructure. Global data center power consumption reached 460 TWh in 2024 and is projected to exceed 1,000 TWh by 2030. But the real story isn’t demand—it’s the 2,600 GW sitting in U.S. interconnection queues, more than twice the total installed power plant fleet. Average wait times have stretched to 5-7 years.
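
To put the consumption projection in annual terms, the two figures above can be converted into an implied compound growth rate. This is a minimal sketch using only the 460 TWh (2024) and 1,000 TWh (2030) numbers quoted here; the function name is illustrative, and 1,000 TWh is treated as a floor since the projection says "exceed".

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value in `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


START_TWH = 460.0   # global data center consumption in 2024 (from the text)
END_TWH = 1000.0    # lower bound of the 2030 projection (from the text)
YEARS = 2030 - 2024

cagr = implied_cagr(START_TWH, END_TWH, YEARS)
print(f"Implied growth: at least {cagr:.1%} per year")
```

That works out to a little under 14% per year, compounding for six straight years, against interconnection waits of 5-7 years.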

Power density is exploding: NVIDIA’s GB200 NVL72 racks consume 120-132 kW, while next-generation Kyber NVL576 racks target 600 kW—a 5x increase requiring entirely new power architectures. NVIDIA’s answer is 800VDC (high-voltage direct current), which eliminates multiple AC-DC conversion stages and delivers 5% end-to-end efficiency improvement, 45% copper reduction, and 30% lower total cost of ownership.
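
The physics behind the voltage jump is plain Ohm's law arithmetic: for a fixed power, current falls in proportion to voltage, and resistive loss in a given conductor falls with the square of the current. The sketch below assumes a 54 V rack bus as the comparison point, which is my assumption about today's rack-level DC distribution and is not a figure from NVIDIA. The efficiency, copper, and cost numbers quoted above are NVIDIA's claims and are not derived here.

```python
def bus_current_amps(power_watts: float, bus_volts: float) -> float:
    """Current needed to deliver power_watts at bus_volts (I = P / V)."""
    return power_watts / bus_volts


RACK_POWER_W = 600_000.0   # Kyber NVL576 target from the text
LEGACY_BUS_V = 54.0        # assumption: typical low-voltage rack bus today
HVDC_BUS_V = 800.0         # 800VDC architecture from the text

legacy_amps = bus_current_amps(RACK_POWER_W, LEGACY_BUS_V)
hvdc_amps = bus_current_amps(RACK_POWER_W, HVDC_BUS_V)

# For the same conductor resistance, I^2 * R loss scales with current squared.
current_ratio = legacy_amps / hvdc_amps
loss_ratio = current_ratio ** 2

print(f"54 V bus:  {legacy_amps:,.0f} A")
print(f"800 V bus: {hvdc_amps:,.0f} A")
print(f"Current falls ~{current_ratio:.1f}x; same-conductor resistive loss falls ~{loss_ratio:.0f}x")
```

Under these assumptions a 600 kW rack would need over 11,000 A at 54 V but only 750 A at 800 V, which is why the copper savings are so large.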

This is where Bloom Energy becomes critical. Their solid oxide fuel cells naturally generate 380V DC—native to the 800VDC architecture—and can deploy 50 MW in 90 days versus 5-7 years for grid interconnection. The Oracle AI factory order was delivered in just 55 days. Bloom’s $5 billion framework with Brookfield (NVIDIA’s $100B AI infrastructure partner) creates a direct pipeline to Kyber-spec data centers. I wrote extensively about this thesis in my Bloom Energy deep dive.

This is where my LED lighting background becomes relevant. At Deco, we saw how power efficiency constraints drove entire technology transitions. The same physics applies here: you can’t defy thermodynamics, you can only work around it—whether through 800VDC efficiency gains, behind-the-meter fuel cells, or moving to orbit where heat radiates into vacuum for free.

Investment angle: Bloom Energy (BE) is up ~1,000% over 12 months on the AI power thesis—watch for continued Brookfield deployment announcements. Vertiv has a record $9.5 billion backlog for cooling infrastructure. The 800VDC ecosystem partners—Delta, Eaton, Schneider Electric—benefit from the architecture transition. Behind-the-meter generation captures 6-15% of incremental data center demand (8-20 GW) through 2030.

7. Inference Captures Two-Thirds of AI Compute Spending

The industry’s center of gravity is shifting from training to inference. By 2026, inference accounts for 65-70% of AI compute spending. This reflects economic reality: a single training run produces years of inference workloads.

OpenAI’s projected $4 billion of spending on inference versus $3 billion on training presages where the market is heading. GPT-4’s inference bill is projected at $2.3 billion annually, 15x its training cost.
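
For readers who want to see how these data points relate to the 65-70% prediction, here is the arithmetic as a minimal sketch. It uses only the dollar figures quoted above; the function and variable names are illustrative.

```python
def inference_share(inference_usd: float, training_usd: float) -> float:
    """Inference as a fraction of combined inference + training spend."""
    return inference_usd / (inference_usd + training_usd)


# OpenAI's projected spend (from the text).
OPENAI_INFERENCE_USD = 4e9
OPENAI_TRAINING_USD = 3e9
openai_share = inference_share(OPENAI_INFERENCE_USD, OPENAI_TRAINING_USD)

# GPT-4: annual inference bill and its stated multiple of training cost (from the text).
GPT4_INFERENCE_USD_PER_YEAR = 2.3e9
GPT4_INFERENCE_TO_TRAINING_MULTIPLE = 15
gpt4_implied_training_usd = GPT4_INFERENCE_USD_PER_YEAR / GPT4_INFERENCE_TO_TRAINING_MULTIPLE

print(f"OpenAI inference share of compute spend: {openai_share:.0%}")
print(f"GPT-4 implied training cost: ${gpt4_implied_training_usd / 1e6:.0f}M")
```

OpenAI's split works out to about 57% inference, so the 65-70% call assumes the mix keeps shifting in the same direction.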

This shift creates openings for specialized inference silicon. Groq’s Language Processing Units deliver 300+ tokens/second on Llama 70B, an order of magnitude faster than GPUs. NVIDIA’s $20 billion Groq licensing deal acknowledges this vulnerability.

Investment angle: AMD’s MI350/MI400 series could capture meaningful share if software maturity closes the CUDA gap. Meta’s deployment of 173,000 MI300X units handling 100% of live Llama 405B inference validates AMD’s competitiveness. Broadcom’s custom ASIC business benefits as hyperscalers optimize inference economics.

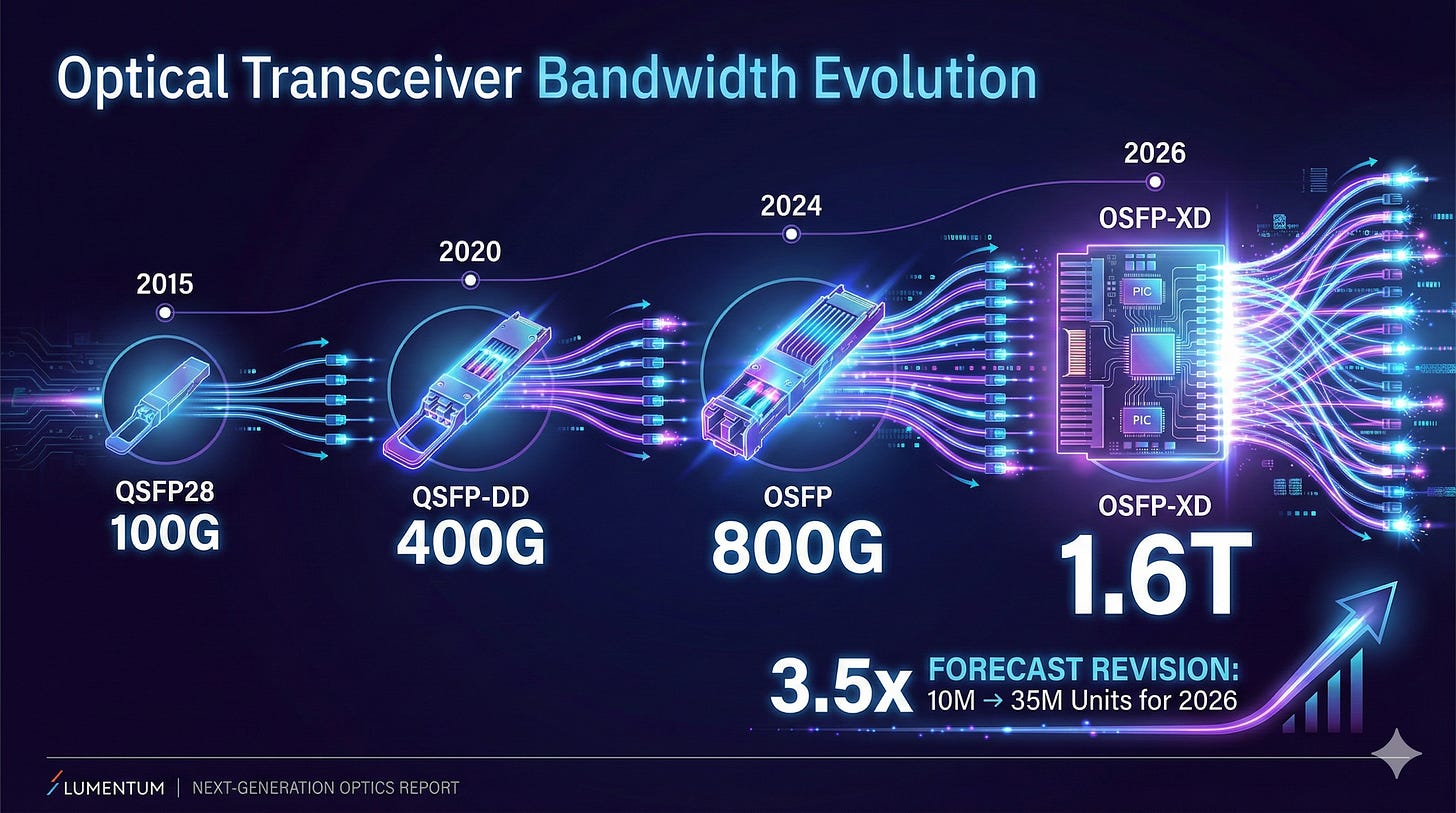

8. 1.6T Optical Networking Volume Inflects 3x Faster Than Expected

The optical networking market is experiencing a demand shock. 1.6T transceiver forecasts have been revised from 10 million to 35 million units for CY2026—a 3.5x increase. The 800G-to-1.6T transition is occurring faster than any prior generation.

From my silicon photonics work at UC San Diego—where we fabricated micro-ring resonators and studied optical interconnect physics—I can tell you that the fundamental advantage of photons over electrons at these bandwidths is insurmountable. The industry is finally hitting the limits where optical wins everywhere, not just long-haul.

Lumentum maintains 50-60% market share in 200G EMLs—the key laser component powering 800G and 1.6T transceivers. Co-packaged optics begins deployment in 2026 but won’t achieve scale until 2027-2028. I wrote extensively about this thesis in my Data Center Optics Deep Dive.

Investment angle: Lumentum’s EML dominance provides component-level exposure. Astera Labs’ 104% YoY revenue growth reflects connectivity demand. Credo’s 88% AEC market share provides a differentiated play on the copper-to-optical transition.

9. TSMC Maintains 70%+ Foundry Share While CoWoS Remains the True Bottleneck

TSMC’s dominance has become structural. The company captured 72% market share in Q3 2025, with advanced nodes representing 74% of wafer revenue. Arizona Fab 1 achieved 92% yield—reportedly surpassing Taiwan sites.

The Arizona expansion has accelerated to $165 billion total investment for 6 fabs, 2 advanced packaging facilities, and an R&D center. TSMC’s 2nm entered volume production with ~15 customers designing on the node.

But the real constraint is advanced packaging. TSMC’s CoWoS capacity is expanding from 75,000-80,000 wafers/month to 120,000-130,000—still insufficient. Morgan Stanley estimates ~1 million CoWoS wafers demanded in 2026, with NVIDIA alone requiring 595,000 wafers (60% of total).

Investment angle: TSMC (TSM) remains foundational—the company manufactures 92%+ of advanced AI silicon regardless of which vendor wins. The packaging bottleneck creates opportunity for ASE Technology and Amkor.

10. Foundation Model API Pricing Collapses 70%+ as Efficiency Compounds

API pricing is deflating at unprecedented speed. “LLMflation” is running at roughly 10x per year: GPT-3-equivalent performance costs 1,000x less than in 2021 ($60/million tokens → $0.06). DeepSeek demonstrated a 90% cost reduction versus Western competitors.
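
The 10x-per-year and 1,000x figures can be reconciled with a short calculation. This is a minimal sketch that assumes a constant annual deflation factor, which is a simplification of mine; real price cuts arrive in steps. The function name is illustrative.

```python
import math


def years_of_deflation(start_price: float, end_price: float, annual_factor: float) -> float:
    """Years needed for start_price to fall to end_price at a constant annual_factor-x decline."""
    return math.log10(start_price / end_price) / math.log10(annual_factor)


START_USD_PER_M_TOKENS = 60.0   # GPT-3-class pricing in 2021 (from the text)
END_USD_PER_M_TOKENS = 0.06     # equivalent performance today (from the text)
ANNUAL_FACTOR = 10.0            # "LLMflation" rate quoted in the text

total_drop = START_USD_PER_M_TOKENS / END_USD_PER_M_TOKENS
years = years_of_deflation(START_USD_PER_M_TOKENS, END_USD_PER_M_TOKENS, ANNUAL_FACTOR)

print(f"Total price drop: {total_drop:,.0f}x")
print(f"Years at {ANNUAL_FACTOR:.0f}x per year: {years:.1f}")
```

A 1,000x drop is three consecutive years of 10x declines, so the two numbers describe the same trend.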

This compression is driven by open-weights models setting price floors, inference serving optimizations (vLLM delivers 24x throughput improvement), and quantization advances enabling INT4/INT8 deployments.

The business model implications are severe. Both OpenAI and Anthropic face compression of their 50-55% gross margins as pricing wars intensify. OpenAI’s $8 billion projected operating loss for 2025 underscores current economics.

Investment angle: This is primarily a risk to watch. Foundation model valuations (OpenAI at 39x revenue) assume durable pricing power that open source is eroding. Beneficiaries are enterprises gaining inference cost leverage and inference optimization platforms.

The Bottom Line

The defining characteristic of 2026’s AI infrastructure landscape is that constraints—power, packaging, memory—matter more than raw performance. Companies solving bottlenecks (Bloom Energy, Vertiv, SK Hynix, TSMC advanced packaging) will outperform those dependent on bottleneck resolution.

Three themes to watch:

The Orbital Frontier: SpaceX’s IPO with explicit “AI data centers in space” justification signals that the convergence of satellite infrastructure and AI computing has moved from science fiction to strategic priority. The physics advantages—unlimited solar power, passive cooling, no permitting—could fundamentally alter the economics of AI infrastructure.

The Inference Shift: The 65-70% inference share fundamentally changes competitive dynamics. AMD, Broadcom custom silicon, and specialized inference chips gain relevance while training-optimized solutions face margin pressure.

Efficiency as Disruption: DeepSeek’s demonstration that an 18x training cost reduction and a 36x inference cost reduction are achievable suggests efficiency gains could moderate the infrastructure buildout faster than capex plans assume. This is the key risk to consensus “secular growth” narratives.

The next 12 months will determine whether AI infrastructure investment represents a generational opportunity or a classic capital cycle overshoot. These predictions are designed to identify where the probabilities favor the prepared.

Happy New Year!!!!

Disclaimer: The author holds positions in several companies mentioned in this analysis. This is not investment advice. Do your own research.

Ben Pouladian is CEO of BEP Holdings and publishes investment research through BEP Research. He has an electrical engineering background from UC San Diego where he worked in Professor Fainman’s ultrafast nanoscale optics lab on silicon photonics and micro-ring resonators. He co-founded Deco Lighting in 2005, scaling it to over $50 million in revenue before exiting in 2019. Follow him on X @benitoz or visit www.benpouladian.com.

"...physics applies here: you can’t defy thermodynamics, you can only work around it—whether through 800VDC efficiency gains, behind-the-meter fuel cells..."

One thing folks might be missing about Bloom Energy is that it's not just the physics (or electrochemistry) of the energy production but also the second-order physics. How can it deliver something in 55 days? It's the physics of producing the power-generating equipment itself. I've alluded to it a bit in my notes, but I should probably do a more detailed post about it in the future.

Also, yes, the valuation looks stretched, but that ultimately depends on how much it can expand its market. But yeah, the margin of safety would be lacking at current prices if this AI boom runs into any hiccups.

Respectfully, a lot of these plays have already played out and are at ATHs for 2025. Every single one of your plays is at an ATH with an insane valuation.