Bloom Energy Is Actually Getting Deployed

Wall Street thinks this is a concept stock. They’re wrong. The orders are real, the permits are filed, and the hyperscalers are desperate.

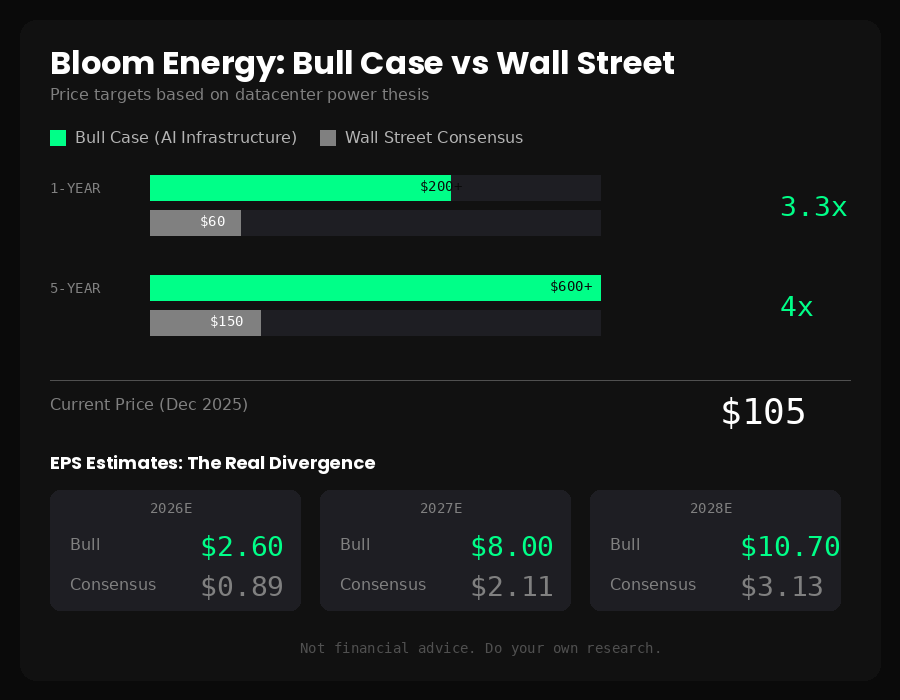

BE (NYSE) | ~$105 | 1-Year: $200+ | 5-Year: $600+

Here’s the prevailing Wall Street narrative on Bloom Energy: interesting technology, maybe someday, but nobody’s actually going to use fuel cells to power AI datacenters when you can just build gas turbines.

That narrative is already wrong.

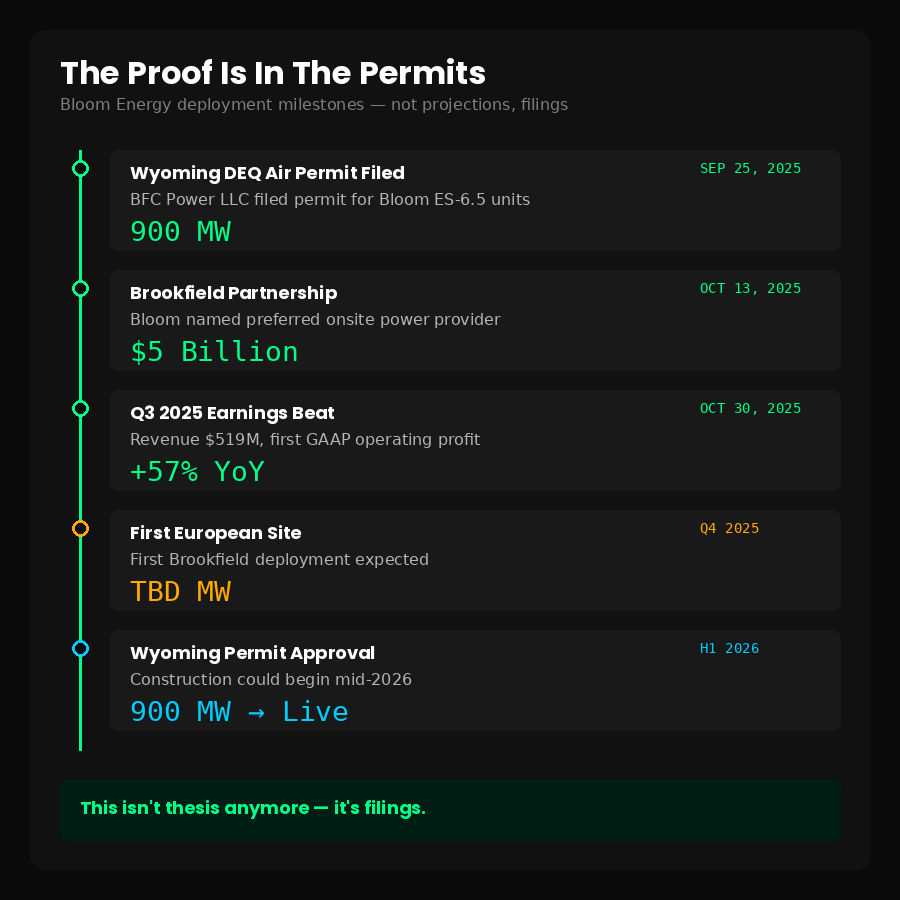

In September, an air permit hit Wyoming’s DEQ for 900 megawatts of Bloom fuel cells at Crusoe’s new AI campus. Not a press release. Not a “partnership announcement.” An actual regulatory filing specifying Bloom Energy ES-6.5 Generation Units.

In October, Brookfield committed $5 billion—real dollars—to deploy Bloom across their global AI infrastructure portfolio. They named Bloom their “preferred onsite power provider.”

Last quarter, Bloom posted $519 million in revenue. Up 57% year-over-year. They delivered an AI factory to Oracle in 55 days.

This isn’t a concept anymore. It’s a deployment.

Why This Matters Right Now

Microsoft’s CFO said something remarkable on their last earnings call:

“We are, and have been, short now for many quarters. I thought we were going to catch up. We are not.”

Short on what? Not chips. Power.

Satya Nadella put it bluntly in November: “The biggest issue we are now having is not a compute glut, but it’s power.”

This is the CEO of a $3 trillion company admitting that GPUs are sitting in inventory because they can’t plug them in. Think about that. NVIDIA is shipping. Microsoft is buying. And the hardware is collecting dust because there’s nowhere to put it that has electricity.

Amazon’s saying the same thing. Andy Jassy: “We could be growing faster, if not for some of the constraints on capacity. Those constraints come in the form of chips from our third-party partners, power constraints, and supply chains.”

The AI scaling bottleneck has moved. It’s not silicon anymore. It’s electrons.

The Grid Can’t Save Them

Here’s a number that should scare every AI investor: 8 years.

That’s the average wait time to connect a new datacenter to the grid through PJM Interconnection, the largest power market in the US. In Northern Virginia—Data Center Alley—it’s pushing 10 years.

The hyperscalers don’t have 8 years. They don’t have 3 years. OpenAI’s Sora hit 1 million downloads in 5 days. Anthropic tripled revenue in 5 months. The demand curve is vertical and the power infrastructure was built to grow at 2% annually.

New high-voltage transmission construction has collapsed to 180 miles over the past two years. Down from 1,700 miles annually a decade ago. The supply chain for transformers is so broken that Bloomberg ran a feature calling it “the device throttling the world’s electrified future.”

You can’t wait for the grid. You need power behind the meter, on-site, deployable in months not years.

Why Not Just Use Gas Turbines?

This is the bear case, and it deserves a straight answer.

GE Vernova makes excellent gas turbines. They’re proven, they’re cheaper upfront ($850-1,500/kW versus ~$7,000/kW for Bloom), and they just signed 9GW of reservations in 30 days. Crusoe itself has a turbine deal with GE.

So why would anyone pay roughly 5x to 8x more per kilowatt for fuel cells?

Three reasons:

Speed. Bloom delivers in 90-120 days. A gas turbine installation takes 3-4 years by the time you permit, construct, and commission. When you’re hemorrhaging market share because you can’t deploy compute fast enough, time is worth more than capital.

Permitting. Gas turbines combust fuel. They emit NOx, SOx, particulates. They’re loud. Communities hate them. $64 billion in datacenter projects have been blocked or delayed by local opposition. Bloom’s fuel cells run an electrochemical reaction: no combustion, so almost none of the NOx, SOx, and particulates that combustion produces, and about 65 decibels (roughly the volume of a normal conversation). They still emit CO2 because they run on natural gas, but they permit faster because neighbors don’t fight them.

Architecture. This is the one nobody’s talking about yet, and it’s the real unlock.

The 800-Volt Revolution

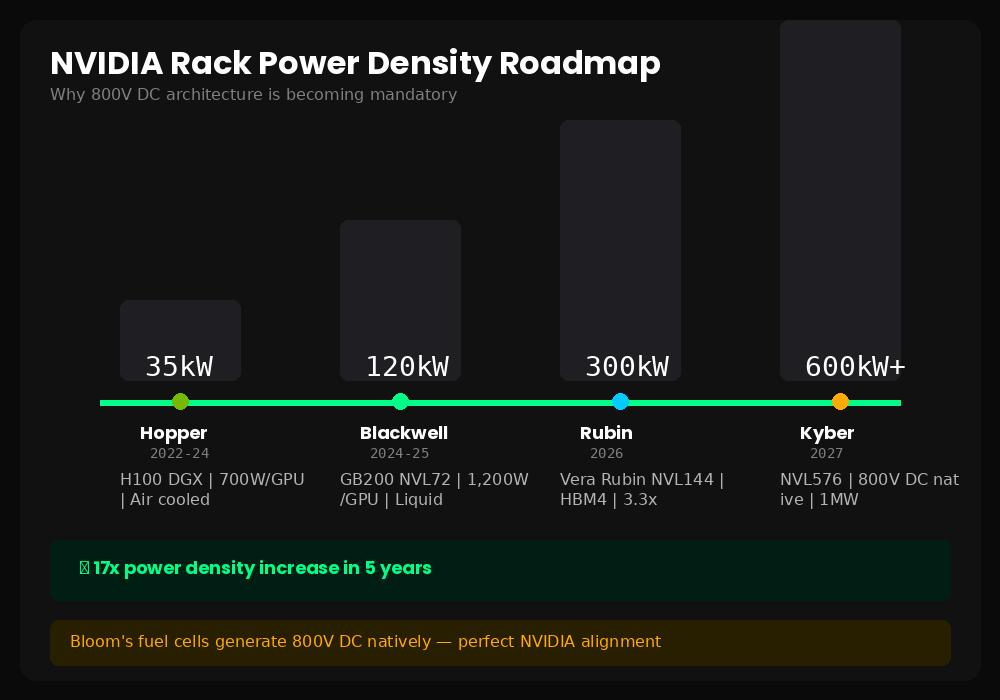

NVIDIA’s next-generation AI racks are going to change everything about datacenter power.

Today’s Blackwell systems draw maybe 120kW per rack. The upcoming Rubin and Feynman generations? 600kW to over 1 megawatt. Per rack.

You can’t deliver a megawatt at 48 volts—that’s what current datacenters run. The amperage would be insane. You’d lose massive amounts of power to resistance. The copper alone would be uneconomical.
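To put rough numbers on that, here is a minimal sketch using only I = P / V and the fact that resistive loss scales with the square of current. The 1 MW rack is the upper end of the range cited above. The "same conductor" comparison is my simplifying assumption, not a real busbar design.

```python
# Current needed to deliver rack power at a given DC bus voltage: I = P / V.
# For a conductor of fixed resistance, resistive loss scales with I**2.

def current_amps(power_watts: float, volts: float) -> float:
    """Return the DC current required to deliver power_watts at volts."""
    return power_watts / volts

RACK_POWER_W = 1_000_000  # 1 MW rack, the upper end cited in the text

i_48 = current_amps(RACK_POWER_W, 48)    # legacy 48 V distribution
i_800 = current_amps(RACK_POWER_W, 800)  # 800 V DC distribution

# Assumption: identical conductor resistance in both cases.
loss_ratio = (i_48 / i_800) ** 2

print(f"48 V:  {i_48:,.0f} A")
print(f"800 V: {i_800:,.0f} A")
print(f"I^2R loss ratio at 48 V vs 800 V: {loss_ratio:,.0f}x")
```

Roughly 20,800 amps versus 1,250 amps for the same megawatt. That gap is the whole argument for moving the bus voltage up.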

So the industry is moving to 800-volt DC distribution. NVIDIA published a whole whitepaper on this at OCP in October. It’s not a maybe—it’s their stated architecture for 2027.

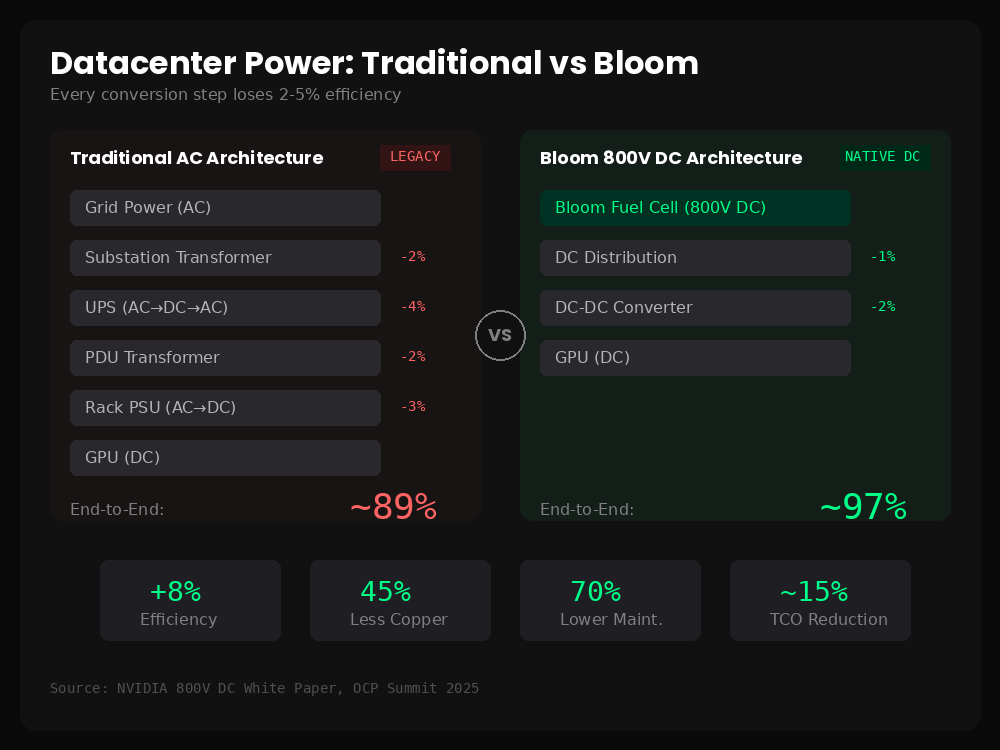

Here’s what makes Bloom interesting: their fuel cells output 800-volt DC natively. No conversion required. The conventional alternatives (gas turbines, nuclear, coal) produce AC power that has to be transformed and rectified multiple times before it reaches the server.

Each conversion loses efficiency. By the time grid AC becomes rack-level DC in a traditional datacenter, you’ve lost 10-12% of your electricity. With Bloom feeding 800V DC directly to 800V DC racks? Maybe 3%.
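A quick sketch of what those loss figures mean at site scale. The 100 MW site size is a hypothetical round number I picked for illustration. The loss fractions are the 10-12% and 3% figures quoted above, taken at face value.

```python
# Power that actually reaches the racks after conversion losses.

def delivered_mw(generated_mw: float, loss_fraction: float) -> float:
    """Return power delivered to the racks after conversion losses."""
    return generated_mw * (1 - loss_fraction)

GENERATED_MW = 100.0  # hypothetical site size, not from the article

traditional_worst = delivered_mw(GENERATED_MW, 0.12)  # 12% loss
traditional_best = delivered_mw(GENERATED_MW, 0.10)   # 10% loss
direct_dc = delivered_mw(GENERATED_MW, 0.03)          # ~3% loss

# Extra usable power from skipping the AC conversion chain.
extra_min = direct_dc - traditional_best
extra_max = direct_dc - traditional_worst

print(f"AC chain delivers:  {traditional_worst:.0f}-{traditional_best:.0f} MW")
print(f"Direct DC delivers: {direct_dc:.0f} MW")
print(f"Extra usable power: {extra_min:.0f}-{extra_max:.0f} MW")
```

On those assumptions, every 100 MW generated yields an extra 7 to 9 MW at the rack.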

That efficiency delta, combined with the smaller building footprint (no massive transformer yards, no redundant switchgear), gets you to roughly 15% lower total cost of ownership. For a hyperscaler, 15% TCO reduction on their power bill isn’t incremental. It’s strategic.

Crusoe Is The Proof Point

Let me be specific about what’s happening in Wyoming.

Crusoe is building an AI datacenter campus near Cheyenne with Tallgrass Energy. The site is designed for 1.8GW initially, expandable to 10GW. It’s one of the largest AI infrastructure projects announced in the US.

The air permit filed in September requests approval for up to 900MW of “Bloom Energy ES-6.5 Generation Units.” The filing specifies emission calculations for the fuel cells. It’s not vague—it names the exact product.

Now, I want to be clear about what this is and isn’t. It’s a permit application, not a purchase order. Developers file for maximum capacity and build in phases. Bank of America put out a note emphasizing this distinction, and they’re right.

But here’s what the skeptics miss: you don’t file a 900MW air permit for a technology you’re not serious about deploying. Permitting takes 6-12 months. Construction planning runs in parallel. Crusoe isn’t filing this paperwork for fun.

If even half of that permitted capacity gets deployed, it’s transformational for Bloom. Their total installed base today is around 1.3GW. Crusoe alone could add 450MW or more, about a third of that base.

Brookfield Changes The Risk Profile

The Crusoe filing got the stock moving in September. The Brookfield deal in October confirmed it wasn’t a one-off.

Brookfield manages over a trillion dollars. They’ve deployed $100 billion in digital infrastructure. When they announce a “$5 billion strategic AI infrastructure partnership” and name Bloom their preferred onsite power provider, that’s not a press release partnership. That’s a procurement decision.

Sikander Rashid, their Global Head of AI Infrastructure, said it directly: “Behind-the-meter power solutions are essential to closing the grid gap for AI factories.”

The first European deployment is expected before year-end. Bloom’s CEO suggested this is just phase one—Brookfield has “$50 billion in AI opportunities and is tripling the size of its AI strategy over the next three years.”

Add Brookfield to the customer list that already includes American Electric Power (up to 1GW), Equinix (100+ MW across 19 datacenters), and Oracle. These aren’t pilot projects. They’re infrastructure decisions by sophisticated buyers who’ve done the math.

The Sora Problem (And Opportunity)

Here’s what’s about to make the power shortage much worse: video.

OpenAI’s Sora hit 1 million downloads faster than ChatGPT did. That’s not a typo. A video generation tool, available only by invitation due to compute constraints, outpaced the most viral AI product in history.

Why does this matter for power? Because video generation is enormously compute-intensive. Generating two 6-second Sora clips consumes roughly as much compute as a developer using AI coding tools for an entire day.

Hugging Face quantified this in September: AI video generation energy demands quadruple when video length doubles. It’s non-linear scaling in the most power-hungry direction possible.
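"Quadruple when length doubles" is quadratic scaling. Here is a minimal sketch of what that implies if the relationship holds across the whole range, which is my assumption and not something the quoted finding states. The 6-second baseline is just a convenient reference length.

```python
# Relative energy under a power law: energy ~ length ** exponent.
# Doubling length quadruples energy, so exponent = log2(4) = 2.

def relative_energy(length_s: float,
                    base_length_s: float = 6.0,
                    exponent: float = 2.0) -> float:
    """Energy relative to a clip of base_length_s seconds (assumed power law)."""
    return (length_s / base_length_s) ** exponent

for length_s in (6, 12, 24, 60):
    print(f"{length_s:>3} s clip: {relative_energy(length_s):>5.0f}x baseline energy")
```

Under that assumption a 60-second clip costs about 100 times the energy of a 6-second one, not 10 times.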

Today, maybe a million developers use generative AI tools regularly. Three billion people use video-centric social platforms. When TikTok, Instagram, and YouTube roll out their Sora competitors—and they will—compute demand explodes. And with it, power demand.

The hyperscalers know this is coming. It’s why they’re desperate to deploy capacity now, before the video wave hits.

Q3 Showed The Model Works

Bloom just reported their strongest quarter ever. $519 million in revenue, up 57% year-over-year, crushing estimates of $425 million.

More importantly, the unit economics are improving. Gross margins expanded 540 basis points to 29.2%. Non-GAAP operating income hit $46 million, up 469% from the prior year. They even squeaked out positive GAAP operating income—$7.8 million versus a loss last year.
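For anyone who wants to back into the prior-year comparables, here is a small sketch that derives them from the figures above. The inputs are the reported numbers as quoted in this piece. The derived values are my arithmetic, not figures from the filing.

```python
# Back out prior-year comparables from the Q3 figures quoted in the text.

revenue_m = 519.0           # Q3 revenue, $M
yoy_growth = 0.57           # +57% year-over-year
estimate_m = 425.0          # consensus estimate, $M
gross_margin_pct = 29.2     # current gross margin, %
margin_expansion_bps = 540  # expansion in basis points
non_gaap_op_income_m = 46.0 # non-GAAP operating income, $M
op_income_growth = 4.69     # +469% year-over-year

prior_year_revenue_m = revenue_m / (1 + yoy_growth)
beat_pct = (revenue_m / estimate_m - 1) * 100
prior_margin_pct = gross_margin_pct - margin_expansion_bps / 100
prior_op_income_m = non_gaap_op_income_m / (1 + op_income_growth)

print(f"Implied prior-year revenue:         ${prior_year_revenue_m:.1f}M")
print(f"Beat vs. estimate:                  {beat_pct:.1f}%")
print(f"Implied prior-year gross margin:    {prior_margin_pct:.1f}%")
print(f"Implied prior-year non-GAAP op inc: ${prior_op_income_m:.1f}M")
```

The implied picture: roughly $331 million of revenue and a gross margin near 24% a year ago, and a beat of about 22% against the estimate.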

The balance sheet is solid: $627 million in cash, plus a $2.2 billion convertible note offering completed at zero coupon. They have the capital to scale manufacturing to 2GW annual capacity by end of 2026.

This is a company executing, not a concept waiting to prove itself.

What The Bears Get Wrong

The bear case has some valid points. Bloom trades at a nosebleed valuation—500+ trailing P/E. They’ve accumulated $3.9 billion in losses over their history. The fuel cells run on natural gas, which creates ESG complications (Amazon canceled some Oregon deployments over this). Bank of America has a $39 target, implying 60% downside.

But the bears are fighting the last war. Their thesis was “nobody will actually deploy this at scale.” That thesis is dying in real-time. The permits are filed. The partnerships are signed. The revenue is shipping.

The new debate isn’t whether Bloom gets deployed—it’s whether they can manufacture fast enough to meet demand, and whether the premium pricing holds as the market matures. Those are execution questions, not existential ones.

At 13x my 2027 EPS estimate of ~$8, with earnings compounding at 35%+ annually in my base case, I think the risk/reward still favors longs even after the run.

Price Targets

I’m modeling three scenarios:

Base case: Bloom captures meaningful share of the behind-the-meter AI power market. Manufacturing scales to plan. Gross margins continue expanding as the cost-down roadmap executes. 2027 EPS around $8, 2028 around $10.70.

Bull case: The 800V DC architecture becomes industry standard faster than expected. Crusoe and Brookfield deployments accelerate and expand. Additional hyperscaler wins. 2027 revenue north of $7 billion.

Bear case: AI demand growth disappoints. Gas turbines win on cost. Bloom’s capacity expansion hits snags. Stock retreats to $55-80 range.

| Timeframe | Target |
| --- | --- |
| 1-Year (YE 2026) | $200+ |
| 5-Year Upside | $600+ |
| Downside Risk | $55-80 |
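Here is the arithmetic behind those targets, as a sketch you can rerun with your own inputs. The price, EPS estimates, and targets are the figures stated in this piece. The $39 figure is the Bank of America target mentioned earlier. Everything else is derived.

```python
# Valuation arithmetic from the figures stated in the text.

price = 105.0     # approximate current share price
eps_2027 = 8.0    # base-case 2027 EPS estimate
eps_2028 = 10.70  # base-case 2028 EPS estimate

pe_on_2027 = price / eps_2027
implied_eps_growth = eps_2028 / eps_2027 - 1

target_1y, target_5y = 200.0, 600.0
upside_1y = target_1y / price - 1
upside_5y = target_5y / price - 1
target_1y_multiple = target_1y / eps_2027  # P/E the 1-year target implies

bear_low, bear_high = 55.0, 80.0
downside_max = 1 - bear_low / price
downside_min = 1 - bear_high / price
bofa_downside = 1 - 39.0 / price

print(f"P/E on 2027 EPS:            {pe_on_2027:.1f}x")
print(f"2027->2028 EPS growth:      {implied_eps_growth:.1%}")
print(f"1-year upside:              {upside_1y:.0%} ({target_1y_multiple:.0f}x 2027 EPS)")
print(f"5-year upside:              {upside_5y:.0%}")
print(f"Bear-case downside:         {downside_min:.0%} to {downside_max:.0%}")
print(f"Downside to the $39 target: {bofa_downside:.0%}")
```

Two things worth noticing. The 2027 to 2028 step in the base case works out to about 34% growth. And the $200 target implies the stock trading near 25x 2027 earnings, so it depends on multiple expansion as well as earnings.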

The Bigger Picture

Every major AI scaling bottleneck has minted fortunes for whoever solved it.

The CPU-to-GPU transition created $3 trillion+ in market cap for NVIDIA and AMD. The memory bandwidth constraint created $500 billion+ for Hynix, Micron, and Samsung through HBM. The cooling problem created $200 billion+ for Vertiv and Schneider.

Power is the current constraint. It’s arguably the hardest one to solve because the infrastructure was built on a completely different timescale than AI is evolving. And Bloom is one of the very few companies with a deployable solution that works within the physics and economics of where datacenters are headed.

At ~$20 billion market cap, they’re a fraction of what other bottleneck-solvers have achieved. If they execute, the upside is multiples of current prices, not percentages.

Bottom Line

The market still thinks Bloom Energy is a “what if” story. They’re looking at the valuation multiples on trailing numbers and calling it expensive. They’re comparing fuel cell costs to turbine costs on a $/kW basis and calling it uneconomic.

They’re missing that the game has changed. The hyperscalers aren’t optimizing for lowest cost per megawatt anymore. They’re optimizing for fastest path to deployable power. They’re paying for speed, for permitting simplicity, for architectural alignment with where NVIDIA is taking the industry.

Bloom is getting deployed. The evidence is piling up in SEC filings and state permit databases, not just press releases. The revenue is growing 57% and accelerating. The customers are sophisticated infrastructure investors who don’t make $5 billion commitments on hype.

I think this is one of the best risk/rewards in AI infrastructure right now.

Disclosure: I hold a position in BE. This is investment research, not advice. Do your own work.

About the Author

Ben Pouladian is a Los Angeles-based tech investor and entrepreneur focused on AI infrastructure, semiconductors, and the power systems enabling the next generation of compute. He was co-founder of Deco Lighting (2005–2019), where he helped build one of the leading commercial LED lighting manufacturers in North America. Ben holds an electrical engineering degree from UC San Diego and has spent two decades building and investing in technology companies.

He currently serves on the Terasaki Institute Leadership Board and is a member of YPO (Young Presidents’ Organization). His investment research focuses on AI datacenter infrastructure, GPU computing, and the semiconductor supply chain.

Follow on Twitter/X: @benitoz More at benpouladian.com
